David Mimno is an Associate Professor and Chair of the Department of Information Science in the Ann S. Bowers College of Computing and Information Science at Cornell University. He holds a Ph.D. from UMass Amherst and was previously the head programmer at the Perseus Project at Tufts as well as a researcher at Princeton University. Professor Mimno’s work has been supported by the Sloan Foundation, the NEH, and the NSF.

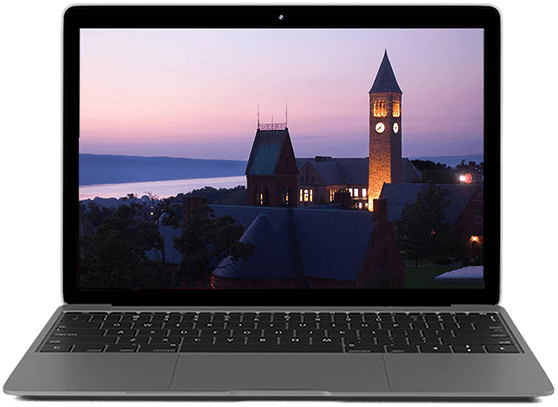

Large Language Model Fundamentals

Cornell Certificate Program

Overview and Courses

In today's rapidly evolving digital landscape, large language models (LLMs) are revolutionizing the way we work, write, research, and make decisions. While AI breakthroughs capture headlines, the true potential lies in effectively applying these powerful tools. This certificate program is tailored for professionals eager to harness LLM technologies and implement them immediately.

Throughout this hands-on program, you will acquire practical skills to evaluate, prompt, fine-tune, and deploy LLMs to address real-world challenges. You'll delve into major platforms and tools, including the Hugging Face API, and discover how to select the right model for your needs. You'll explore techniques that shape model behavior, such as preference learning and tokenization strategies, alongside foundational concepts like probabilities and attention mechanisms.

Guided by Cornell Professor David Mimno, a leading expert in computational text analysis, this program demystifies LLMs and equips you to integrate them confidently into your workflows. Whether you're in business, education, policy, or research, this program is your roadmap to making AI work for you, starting now.

You will be most successful in this program if you have had some exposure to natural language processing or machine learning. You should also be comfortable with Python programming and basic statistics.

You must complete the courses in this certificate program in the order in which they appear.

Course list

In this course, you will discover how to work directly with some of today's most powerful large language models (LLMs). You'll start by exploring online LLM-based systems and seeing how they handle tasks ranging from creative text generation to language translation. You'll compare how models from major organizations like OpenAI, Google, and Anthropic differ in their outputs and underlying philosophies.

You will then move beyond web interfaces and learn how to find and load various foundation models through the Hugging Face hub. By mastering Python scripts that retrieve and run these models locally, you'll gain deeper control over prompt engineering and understand how different model architectures respond to your requests. Finally, you'll tie all these skills together in hands-on projects where you generate text, analyze tokenization details, and assess outputs from multiple LLMs.

- Jul 1, 2026

- Sep 23, 2026

- Dec 16, 2026

- Mar 10, 2027

- Jun 2, 2027

In this course, you will use Python to quantify the next-word predictions of large language models (LLMs) and understand how these models assign probabilities to text. You'll compare raw scores from LLMs, transform them into probabilities, and explore uncertainty measures like entropy. You'll also build n-gram language models, handle unseen words, and interpret log probabilities to avoid numerical underflow.
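As a rough illustration of turning raw scores into probabilities and measuring uncertainty, the sketch below applies a softmax to a handful of logits and computes the entropy of the result. The candidate tokens and logit values are made up for the example and do not come from any real model or from the course materials.

```python
import math

def softmax(logits):
    """Convert raw scores (logits) into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def entropy_bits(probs):
    """Shannon entropy in bits; higher means the prediction is less certain."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Hypothetical logits for four candidate next tokens (invented numbers).
candidates = ["mat", "sofa", "roof", "moon"]
logits = [4.0, 2.0, 1.0, -1.0]

probs = softmax(logits)
for token, p in zip(candidates, probs):
    print(f"{token:>5}: {p:.3f}")
print(f"entropy: {entropy_bits(probs):.3f} bits")
```

A uniform distribution over four tokens would have an entropy of exactly 2 bits, so a value well below that suggests the scores favor one or two candidates.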

By the end of this course, you will be able to evaluate entire sentences for their likelihood, implement your own model confidence checks, and decide when and how to suggest completions for real-world text applications.
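One way to picture sentence-level likelihood is a toy bigram model scored in log space. The sketch below assumes add-one (Laplace) smoothing to handle unseen words and a two-sentence corpus invented for illustration; the course may use different smoothing or data.

```python
import math
from collections import Counter

def train_bigram(sentences):
    """Count unigrams and bigrams, padding each sentence with boundary markers."""
    unigrams, bigrams = Counter(), Counter()
    for sentence in sentences:
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        unigrams.update(tokens)
        bigrams.update(zip(tokens, tokens[1:]))
    return unigrams, bigrams

def sentence_logprob(sentence, unigrams, bigrams):
    """Sum of log P(word | previous word) with add-one smoothing."""
    vocab_size = len(unigrams) + 1  # +1 reserves probability mass for unseen words
    tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
    total = 0.0
    for prev, word in zip(tokens, tokens[1:]):
        numerator = bigrams[(prev, word)] + 1
        denominator = unigrams[prev] + vocab_size
        total += math.log(numerator / denominator)
    return total

# Toy corpus, invented for illustration.
corpus = ["the cat sat on the mat", "the dog sat on the rug"]
unigrams, bigrams = train_bigram(corpus)

seen = sentence_logprob("the cat sat on the mat", unigrams, bigrams)
unseen = sentence_logprob("the zebra sat on the mat", unigrams, bigrams)
print(f"seen sentence: {seen:.2f}")
print(f"with new word: {unseen:.2f}")

# Why logs: multiplying many small probabilities underflows to zero,
# while adding their logs stays representable.
product = 1.0
for _ in range(200):
    product *= 0.01
print(product, 200 * math.log(0.01))
```

The sentence containing an unseen word still receives a finite score because smoothing never assigns a probability of zero.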

You are required to have completed the following course or have equivalent experience before taking this course:

- LLM Tools, Platforms, and Prompts

- Apr 22, 2026

- Jul 15, 2026

- Oct 7, 2026

- Dec 30, 2026

- Mar 24, 2027

- Jun 16, 2027

In this course, you will discover how to adapt and refine large language models (LLMs) for tasks beyond their default capabilities by creating curated training sets, tweaking model parameters, and exploring cutting-edge approaches such as preference learning and low-rank adaptation (LoRA). You'll start by fine-tuning a base model using the Hugging Face API and analyzing common optimization strategies, including learning rate selection and gradient-based methods like AdaGrad and Adam.
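To make the idea behind low-rank adaptation concrete, here is a minimal pure-Python sketch. It assumes the standard LoRA formulation, in which a frozen weight matrix W is adjusted by a scaled product of two small trainable matrices, B and A. The matrix sizes, values, and helper names are illustrative only and are not taken from the course.

```python
def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def lora_effective_weight(w, a, b, alpha):
    """Return W + (alpha / r) * B @ A, the weight a LoRA layer effectively applies."""
    r = len(a)  # rank of the update = number of rows in A
    delta = matmul(b, a)
    scale = alpha / r
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]

def trainable_params(d_out, d_in, r):
    """Return (full fine-tuning, LoRA) trainable parameter counts for one matrix."""
    return d_out * d_in, r * (d_in + d_out)

d_out, d_in, r = 4, 6, 2
w = [[0.1 * (i + j) for j in range(d_in)] for i in range(d_out)]  # frozen weights (made up)
a = [[0.01 * (j + 1) for j in range(d_in)] for _ in range(r)]     # trainable, r x d_in
b = [[0.0] * r for _ in range(d_out)]                              # trainable, d_out x r

# B starts at zero, so the adapted layer initially matches the pretrained one.
unchanged = lora_effective_weight(w, a, b, alpha=4)

# Pretend one training step nudged a single entry of B.
b[0][0] = 0.5
adapted = lora_effective_weight(w, a, b, alpha=4)

full, lora = trainable_params(4096, 4096, 8)
print(f"full fine-tuning: {full:,} parameters, LoRA (r=8): {lora:,} parameters")
```

The parameter count is the main appeal: for a 4096 by 4096 matrix, a rank-8 update trains a small fraction of the values that full fine-tuning would.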

As you progress, you will evaluate your models with metrics that highlight accuracy, precision, and recall. You'll then extend your techniques to include pairwise preference optimization, which lets you incorporate direct user feedback into model improvements. Along the way, you'll see how instruction-tuned chatbots are built, practice customizing LLM outputs for specific tasks, and examine how to set up robust evaluation loops to measure success.
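The page does not say which pairwise preference method the course uses. As one possible illustration, the sketch below assumes a DPO-style (Direct Preference Optimization) logistic loss over a preferred and a rejected response. The function name and all log-probability values are hypothetical.

```python
import math

def pairwise_preference_loss(policy_chosen, policy_rejected,
                             ref_chosen, ref_rejected, beta=0.1):
    """DPO-style loss for one (chosen, rejected) response pair.

    Arguments are summed log-probabilities of each full response under the
    model being trained (policy) and under a frozen reference model.
    """
    margin = (policy_chosen - ref_chosen) - (policy_rejected - ref_rejected)
    # Equivalent to -log(sigmoid(beta * margin)).
    return math.log1p(math.exp(-beta * margin))

# Hypothetical log-probabilities (made-up numbers).
before = pairwise_preference_loss(-12.0, -11.0, ref_chosen=-12.0, ref_rejected=-11.0)
after = pairwise_preference_loss(-9.0, -14.0, ref_chosen=-12.0, ref_rejected=-11.0)
print(f"loss when policy equals reference: {before:.4f}")
print(f"loss after favoring the chosen response: {after:.4f}")
```

The loss falls as the policy raises the probability of the preferred response relative to the rejected one, measured against the reference model.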

By the end of this course, you'll have a clear blueprint for building and honing specialized models that can handle diverse real-world applications.

You are required to have completed the following courses or have equivalent experience before taking this course:

- LLM Tools, Platforms, and Prompts

- Language Models and Next-Word Prediction

- May 6, 2026

- Jul 29, 2026

- Oct 21, 2026

- Jan 13, 2027

- Apr 7, 2027

- Jun 30, 2027

In this course, you will analyze how large language models are constructed from diverse text sources and examine the entire model life cycle, from pretraining data collection to generating meaningful outputs. You'll explore how choices about data type, genre, and tokenization affect a model's performance, discovering how to compare real-world corpora such as Wikipedia, Reddit, and GitHub.

Through hands-on projects, you will design tokenizers, quantify text characteristics, and apply methods like byte-pair encoding to see how different preprocessing strategies shape model capabilities. You'll also investigate how models interpret context by studying keyword-in-context (KWIC) views and embedding-based analysis.
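For a sense of how byte-pair encoding builds a vocabulary, the sketch below learns a few merges from a tiny word-frequency table. It follows the commonly described procedure of repeatedly merging the most frequent adjacent symbol pair; the word counts are invented, and the tie-breaking rule is an arbitrary choice made here for determinism.

```python
from collections import Counter

def count_pairs(words):
    """Count adjacent symbol pairs, weighted by word frequency."""
    pairs = Counter()
    for symbols, freq in words.items():
        for pair in zip(symbols, symbols[1:]):
            pairs[pair] += freq
    return pairs

def merge_pair(words, pair):
    """Replace every occurrence of `pair` with a single merged symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

def learn_bpe(word_freqs, num_merges):
    """Start from characters and greedily merge the most frequent pair."""
    words = {tuple(word): freq for word, freq in word_freqs.items()}
    merges = []
    for _ in range(num_merges):
        pairs = count_pairs(words)
        if not pairs:
            break
        # Ties are broken by the pair itself so the result is deterministic.
        best = max(pairs, key=lambda p: (pairs[p], p))
        merges.append(best)
        words = merge_pair(words, best)
    return merges, words

# Toy word frequencies, invented for illustration.
word_freqs = {"low": 5, "lower": 2, "newest": 6, "widest": 3}
merges, words = learn_bpe(word_freqs, num_merges=3)
print(merges)
print(words)
```

Frequent endings such as "est" become single tokens after only a couple of merges, which hints at why the composition of the training corpus influences which subwords a tokenizer learns.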

By the end of this course, you will have a clear understanding of how data selection and processing decisions influence the way LLMs behave, preparing you to evaluate or improve existing models.

You are required to have completed the following courses or have equivalent experience before taking this course:

- LLM Tools, Platforms, and Prompts

- Language Models and Next-Word Prediction

- Fine-Tuning LLMs

- May 20, 2026

- Aug 12, 2026

- Nov 4, 2026

- Jan 27, 2027

- Apr 21, 2027

In this course, you will investigate the internal workings of transformer-based language models by exploring how embeddings, attention, and model architecture shape textual outputs. You'll begin by building a neural search engine that retrieves documents through vector similarity, then move on to extracting token-level representations and visualizing attention patterns across different layers and heads.
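Retrieval through vector similarity can be sketched in a few lines. The example below ranks documents by cosine similarity to a query vector. The three-dimensional "embeddings" are hand-written placeholders; in practice they would come from an embedding model, which this sketch does not include.

```python
import math

def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def search(query_vec, doc_vecs, top_k=2):
    """Return the top_k (score, document) pairs, most similar first."""
    scored = [(cosine(query_vec, vec), doc_id) for doc_id, vec in doc_vecs.items()]
    return sorted(scored, reverse=True)[:top_k]

# Hypothetical embeddings (hand-written numbers, not model output).
doc_vecs = {
    "pasta recipe": [0.9, 0.1, 0.0],
    "tax filing guide": [0.0, 0.2, 0.9],
    "pizza dough tips": [0.8, 0.3, 0.1],
}
query_vec = [1.0, 0.2, 0.0]  # stands in for an embedded query about cooking

results = search(query_vec, doc_vecs)
for score, doc_id in results:
    print(f"{score:.3f}  {doc_id}")
```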

As you progress, you will analyze how tokens interact with each other in a large language model (LLM), compare encoder-based architectures with decoder-based architectures, and trace how a single word's meaning can shift from input to output. By mastering techniques like plotting similarity matrices and identifying key influencers in the attention process, you'll gain insights enabling you to decode model behaviors and apply advanced strategies for more accurate, context-aware text generation.
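A simplified view of how tokens interact is scaled dot-product attention. The sketch below omits the learned query, key, and value projections and multiple heads, so it illustrates the mechanism rather than a full transformer layer. The `causal` flag stands in for the typical difference between encoder-style attention (every token sees every other token) and decoder-style attention (each token sees only itself and earlier tokens). The token vectors are invented.

```python
import math

def softmax(xs):
    """Normalize scores into weights that sum to 1."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values, causal=False):
    """Scaled dot-product attention; returns (weights, outputs)."""
    d = len(keys[0])
    weights, outputs = [], []
    for i, q in enumerate(queries):
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        if causal:
            # Mask out later positions so token i cannot look ahead.
            scores = [s if j <= i else float("-inf") for j, s in enumerate(scores)]
        w = softmax(scores)
        weights.append(w)
        outputs.append([sum(wj * v[c] for wj, v in zip(w, values))
                        for c in range(len(values[0]))])
    return weights, outputs

# Three token vectors (made-up numbers), used as queries, keys, and values.
x = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]

full_weights, _ = attention(x, x, x, causal=False)
causal_weights, _ = attention(x, x, x, causal=True)
for row in causal_weights:
    print([round(w, 3) for w in row])
```

Each row of the weight matrix shows how much one token draws on every other token, which is the quantity that attention visualizations typically plot.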

You are required to have completed the following courses or have equivalent experience before taking this course:

- LLM Tools, Platforms, and Prompts

- Language Models and Next-Word Prediction

- Fine-Tuning LLMs

- Language Models and Language Data

- Jun 3, 2026

- Aug 26, 2026

- Nov 18, 2026

- Feb 10, 2027

- May 5, 2027

eCornell Online Workshops are live, interactive 3-hour learning experiences led by Cornell faculty experts. These premium short-format sessions focus on AI topics and are designed for busy professionals who want to gain immediately applicable skills and strategic perspectives. Workshops include faculty presentations, breakout discussions, and guided hands-on practice.

The AI Workshops All-Access Pass provides you with unlimited participation for 6 months from your date of purchase. Whether you choose to attend one workshop per month, or several per week, the All-Access Pass will allow you to customize your AI journey and stay on top of the latest AI trends.

Workshops cover a range of cutting-edge AI topics applicable across industries, hosted by Cornell faculty at the forefront of their fields. Whether you are just getting started with AI, seeking to build your AI skillset, or exploring advanced applications of AI, Workshops will provide you with an action-oriented learning experience for immediate application in your career. Sample Workshops include:

- Work Smarter with AI Agents: Individual and Team Effectiveness

- Leading AI Transformation: Bigger Than You Imagine, Harder Than You Expect

- Using AI at Work: Practical Choices and Better Results

- Search & Discoverability in the Era of AI

- Don't Just Prompt AI - Govern it

- AI-Powered Product Manager

- Leverage AI and Human Connection to Lead through Uncertainty

Faculty Author

Key Course Takeaways

- Set up and utilize large language models for generating accurate text responses

- Apply probability concepts to interpret and compare model predictions

- Customize language models for specific tasks using guided instructions

- Explore the influence of text datasets and tokenization on model output

- Analyze how training data sources and preparation strategies shape model capabilities

- Examine advanced model structures like attention layers and word embeddings to understand language generation

What You'll Earn

- Large Language Model Fundamentals Certificate from Cornell’s Ann S. Bowers College of Computing and Information Science

- 80 Professional Development Hours (8 CEUs)

Who Should Enroll

- Engineers

- Developers

- Analysts

- Data scientists

- AI engineers

- Entrepreneurs

- Data journalists

- Product managers

- Researchers

- Policymakers

- Legal professionals

Frequently Asked Questions

Large language models are already changing how teams write, research, code, and make decisions, but real value comes from knowing what these models are doing, how to evaluate their outputs, and how to adapt them responsibly for your use case. Cornell’s Large Language Model Fundamentals Certificate helps you move beyond surface-level prompting so you can work with modern LLMs with more confidence, precision, and control.

In this certificate program, authored by faculty from the Cornell Bowers College of Computing and Information Science, you will build practical capability with the tools and concepts that sit under everyday AI workflows, including model selection and comparison, prompt design, tokenization, next-word probabilities and uncertainty, fine-tuning approaches such as LoRA, and core ideas behind attention and embeddings. The experience is designed to be hands-on, with Python-based exercises and projects that reinforce what you learn through application.

If you want practical hands-on experience with modern LLM workflows, deeper skill in evaluating and improving model outputs, and a clearer understanding of how LLMs work under the hood, you should choose Cornell's Large Language Model Fundamentals Certificate.

Many online AI courses stop at high-level explanations or solo, self-paced videos. Cornell’s Large Language Model Fundamentals Certificate is built to help you practice the work you would actually do when evaluating and adapting LLMs, then get feedback in a structured, cohort-based learning environment.

Key differences you can expect include:

- Faculty-designed curriculum that goes beyond tips and tricks into the mechanics that drive model behavior, including tokenization, probabilities, attention, and embeddings

- Applied projects that ask you to compare models, measure uncertainty, and tune behavior using real workflows such as fine-tuning and preference learning

- A small-cohort learning model (typically up to 35 learners) with expert facilitation, graded project feedback, and opportunities for live interaction that add support and accountability

- Tooling that reflects current practice, including working with open-source models through the Hugging Face ecosystem and running Python-based experiments in a guided environment

The result is a learning experience that stays practical while giving you a stronger foundation for making informed choices about model selection, evaluation, and adaptation in your organization.

Enrolling in Cornell’s Large Language Model Fundamentals Certificate also provides you with a 6-month All-Access Pass to eCornell's live online AI Workshops, interactive sessions led by world-class Cornell faculty that combine Ivy League insight with practical applications for busy professionals. Each 3-hour Workshop features structured instruction, guided practice, and real tools to build competitive AI capabilities, plus the opportunity to connect with a global cohort of growth-oriented peers. While AI Workshops are not required, they enhance certificate programs through:

- Integrating AI perspectives across most curricula

- Responding to emerging AI developments and trends

- Offering direct engagement with Cornell faculty at the forefront of AI research

Cornell’s Large Language Model Fundamentals Certificate is designed for professionals who want a working understanding of how modern LLMs generate text and how to evaluate and steer their behavior using real tools. The program is a strong fit if you work in roles such as engineering, data science, product management, analytics, research, entrepreneurship, data journalism, policy, or law and you want to engage with LLMs more rigorously than simple prompt-and-hope workflows.

You will be most successful if you:

- Feel comfortable writing and debugging Python

- Have some prior exposure to natural language processing or machine learning concepts

- Can work with basic statistics and interpret probabilities

Cornell’s Large Language Model Fundamentals Certificate program is especially useful if you need to compare model options, understand trade-offs in outputs, or build a more reliable approach to using LLMs for real tasks in your domain.

Across Cornell’s Large Language Model Fundamentals Certificate, projects are designed to make LLM behavior observable and measurable, using Python and widely used tooling so you can transfer what you learn to your own work.

In the Large Language Model Fundamentals Certificate, you can expect projects such as:

- Comparing how different commercial LLMs respond to the same task, then analyzing differences in outputs and limitations

- Loading and prompting open-source foundation models using the Hugging Face ecosystem and documenting model statistics from model cards

- Probing tokenization and generation controls to see how prompts become tokens and how decoding choices change results

- Measuring next-word probabilities, converting logits to probabilities, using entropy to quantify uncertainty, and implementing simple confidence-based heuristics

- Building an autocomplete-style prototype that only suggests completions when the model is sufficiently confident

- Fine-tuning a base model on a focused dataset, designing instruction-response training examples, and experimenting with efficient adaptation approaches such as LoRA

- Applying preference-learning concepts by structuring preferred versus rejected responses and observing how optimization changes model behavior

- Comparing text data sources and training tokenizers to see how dataset composition and preprocessing shape model coverage

- Creating an embedding-based retrieval workflow (a simple neural search engine), visualizing attention patterns, and tracing how token representations shift across model layers

These projects help you practice model evaluation, adaptation, and interpretation in a way that mirrors real LLM work, from selecting a model to validating whether it behaves the way you need.
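As an example of the confidence-based heuristics mentioned in the project list, the sketch below suggests a completion only when the top candidate's probability clears a threshold. The threshold value, candidate tokens, and logits are all assumptions chosen for illustration, not details from the program.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def suggest(candidates, logits, min_prob=0.6):
    """Return the most likely candidate, or None if the model is not confident."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return candidates[best] if probs[best] >= min_prob else None

# Hypothetical next-word candidates and logits (made-up numbers).
candidates = ["you", "them", "it"]
confident = suggest(candidates, [5.0, 1.0, 0.5])
uncertain = suggest(candidates, [1.0, 0.9, 0.8])
print(confident, uncertain)
```

Returning `None` when no candidate is clearly ahead lets an interface stay quiet instead of showing a low-quality suggestion.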

Cornell's Large Language Model Fundamentals Certificate helps you build practical, defensible skill in evaluating and adapting large language models so you can use them more effectively in your role.

After completing the Large Language Model Fundamentals Certificate, you will be prepared to:

- Set up and utilize large language models for generating accurate text responses

- Apply probability concepts to interpret and compare model predictions

- Customize language models for specific tasks using guided instructions

- Explore the influence of text datasets and tokenization on model output

- Analyze how training data sources and preparation strategies shape model capabilities

- Examine advanced model structures like attention layers and word embeddings to understand language generation

Students often report that the program strengthens their confidence and day-to-day effectiveness by making advanced LLM topics feel approachable while still being deep enough to apply immediately. Learners frequently highlight hands-on labs with current tooling (including Hugging Face), experiments that reveal model trade-offs such as accuracy, bias, and output variability, and a steady progression from foundations to application that helps concepts stick. Many also say the program expanded how they think about language and AI, making them better prepared to discuss model behavior, limitations, and practical next steps with technical and non-technical stakeholders.

What truly sets eCornell apart is how our programs unlock genuine career transformation. Learners earn promotions to senior positions, enjoy meaningful salary growth, build valuable professional networks, and navigate successful career transitions.

Cornell’s Large Language Model Fundamentals Certificate, which consists of 5 short courses, is designed to be completed in 3 months. Each course runs for 2 weeks, with a typical weekly time commitment of 6 to 8 hours.

Flexibility comes from a blended online format:

- Most learning activities are asynchronous, so you can watch videos, complete readings, and work on assignments when it fits your schedule

- Live sessions are offered to support discussion and Q&A; attendance is encouraged but not required

- Clear weekly expectations and deadlines help you maintain momentum without needing to be online at set times for most of the course work

This structure is especially helpful if you want hands-on practice with Python and LLM tooling while still keeping control of when you do the work each week.

Students in Cornell’s Large Language Model Fundamentals Certificate often describe it as a clear, confidence-building entry point into how modern LLMs work, paired with hands-on practice that helps them apply concepts immediately in their own projects and workflows. They frequently highlight the way the program makes advanced topics feel approachable while still going deep enough to be genuinely useful.

What students tend to value most includes:

- A practical understanding of transformers, tokenization, and how LLMs generate text

- Hands-on labs using real tooling and workflows, including Hugging Face

- Experiments that compare model behavior and outputs to reveal accuracy, bias, and trade-offs

- Useful projects that reinforce concepts through applied work

- A strong blend of instruction and guided practice, not just theory

- Clear explanations at a steady pace, even for learners new to the topic

- Short, well-organized lessons that are easy to fit into busy schedules

- A flexible learning experience that supports self-paced progress

- Engaging multimedia modules that mix video, readings, and interactive exercises

- Up-to-date content and a learning experience that feels current and relevant

Many students also say Cornell’s Large Language Model Fundamentals Certificate program expanded how they think about language and AI, and that the structure helped them retain knowledge by moving from foundational concepts to practical application in a logical, well-supported sequence.

Comfort with Python is important because the program includes hands-on notebooks where you load models, inspect tokenization, compute probabilities, and run training or fine-tuning workflows. Cornell's Large Language Model Fundamentals Certificate is also best for learners who have had some exposure to natural language processing or machine learning, since you will work with ideas like logits, softmax probabilities, entropy, optimization, and evaluation metrics.

If you are earlier in your journey, the Large Language Model Fundamentals Certificate program can still be manageable if you are ready to spend time debugging and practicing. A good self-check is whether you can read and modify short Python scripts, interpret basic statistics, and learn by experimenting with model outputs.

You will practice with tools that are widely used in modern LLM workflows, especially the Hugging Face ecosystem for finding models, reading model cards, loading models through an API, and running experiments in Python. You’ll also compare outputs from major commercial LLM systems to understand how model behavior differs across providers.

Cornell's Large Language Model Fundamentals Certificate is designed so you can focus on the modeling work rather than environment setup. Coding work is completed in a browser-based Jupyter environment, so you typically do not need to install Python locally. When you hit an error during coding exercises, an embedded Coding Coach can help you interpret error messages, and you can use discussions and facilitator support to troubleshoot.

Practical application is a core theme throughout Cornell's Large Language Model Fundamentals Certificate, even if your goal is not to ship a model to production. You will learn how to evaluate model outputs, quantify uncertainty, and choose between model options based on evidence rather than guesswork, which supports better decision making for AI adoption, procurement, governance discussions, and product planning.

You will also practice methods that transfer directly to real workflows, such as:

- Designing prompts and tests to compare model behavior across tasks

- Using confidence signals and probability concepts to decide when an LLM is likely to be reliable

- Adapting behavior through supervised fine-tuning concepts and preference learning, so you can reason about when customization is worth the effort

- Understanding how training data, tokenization choices, attention, and embeddings influence outputs, which helps you diagnose issues and communicate trade-offs to stakeholders

These capabilities can strengthen how you collaborate with engineering teams and vendors, and help you set more realistic expectations for what LLMs can and cannot do in your context.

Large Language Model Fundamentals

| Select Payment Method | Cost |
|---|---|
| | $3,750 |